System Development with Python

Week 9 :: Advanced OO

Old and New classes

Python is an evolving language. As of Python 2.2, there are old-style and new-style classes. The distinction disappears in Python 3, where every class is new-style.

New-style classes were introduced to unify user defined classes and built-in types.

For instance, this allows you to subclass built-in types such as int, str and dict
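Subclassing a built-in type works like subclassing any other class. The sketch below uses a hypothetical `CountingDict` class, not taken from the course examples. It extends dict so that it counts key lookups made with `d[key]` and then hands the real work back to dict through super():

```python
class CountingDict(dict):
    """A dict subclass that counts how many times keys are read with d[key]."""

    def __init__(self, *args, **kwargs):
        super(CountingDict, self).__init__(*args, **kwargs)
        self.lookups = 0

    def __getitem__(self, key):
        # Add behaviour, then delegate to the built-in dict implementation.
        self.lookups += 1
        return super(CountingDict, self).__getitem__(key)


d = CountingDict(a=1, b=2)
print(d['a'] + d['b'])      # 3
print(d.lookups)            # 2
print(isinstance(d, dict))  # True
```

Only `d[key]` goes through the override. Other dict methods such as `d.get()` use dict's own C implementation and are not counted.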

To make a new-style class, derive from object or from any other new-style class

class C(object):
    # this is a new-style class
    pass

class D():
    # this is an old-style class (in Python 2)
    pass

You almost always want new-style classes.

Some newer features of the language such as properties, super(), etc. do not work with old-style classes.

Subclassing

class Shape(object):

    def __str__(self):
        return "Shape:{}".format(self.__class__.__name__)

class Rectangle(Shape):

    def __init__(self, width, height):
        assert width > 0
        assert height > 0
        self.width = width
        self.height = height

    def area(self):
        """returns the area of this Rectangle"""
        return self.width * self.height

class Square(Rectangle):

    def __init__(self, length):
        self.width = length
        self.height = length

Square inherits the area method from Rectangle

Square overrides the __init__ method so it only takes a single argument

Does Square still get the validation in Rectangle.__init__?
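The quickest way to answer is to try it. The sketch below repeats Rectangle and Square from above in compact form, leaving out Shape. `Square(-1)` is accepted because Square.__init__ never runs Rectangle.__init__, so the asserts are never executed:

```python
class Rectangle(object):
    def __init__(self, width, height):
        assert width > 0
        assert height > 0
        self.width = width
        self.height = height

    def area(self):
        """returns the area of this Rectangle"""
        return self.width * self.height


class Square(Rectangle):
    def __init__(self, length):
        # Rectangle.__init__ is never called, so its asserts never run.
        self.width = length
        self.height = length


bad = Square(-1)   # no AssertionError
print(bad.area())  # 1
```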

Referencing the parent classes with super()

When you override a method in a subclass, it masks the definition in the parent classes

Most things aren't really hidden in Python: you can call the parent class definition through an explicit reference:

Rectangle.__init__(self, length, length)

However, there might be multiple parent classes, and this approach tightly couples the definition of Square to the definition of Rectangle.

We want to locate the method using the same traversal Python itself uses, which is what super() does

class Square(Rectangle): pass

class MyClass(ParentClass):
    def __init__(self):
        super(MyClass, self).__init__()

super()

super() returns a delegate object, which delegates method calls to the appropriate class definition: the next one in the method resolution order (MRO)
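Applied to the Square example, super() passes the call on to Rectangle.__init__, the next class in Square's MRO, so the validation runs again. A minimal sketch using the two-argument super() form from this section:

```python
class Rectangle(object):
    def __init__(self, width, height):
        assert width > 0
        assert height > 0
        self.width = width
        self.height = height

    def area(self):
        return self.width * self.height


class Square(Rectangle):
    def __init__(self, length):
        # Delegate to the next class in Square's MRO (here, Rectangle).
        super(Square, self).__init__(length, length)


print(Square(3).area())  # 9

try:
    Square(-1)
    rejected = False
except AssertionError:
    rejected = True      # Rectangle's assert fired
print(rejected)          # True
```

Note that assert statements are stripped when Python runs with `-O`. Real validation code would usually raise ValueError instead.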

It returns either a bound or an unbound super object, depending on its arguments:

super(type, obj) -> bound super object; requires isinstance(obj, type)
super(type) -> unbound super object
super(type, type2) -> bound super object; requires issubclass(type2, type)

A bound method is one bound to an instance, just a normal method:

class Foo(object):
    def bound_method(self): pass

    @staticmethod
    def unbound_method(): pass

foo = Foo()
foo.bound_method()    # method is bound to the foo instance
Foo.unbound_method()  # a staticmethod is not bound to an instance (or to the class)

Matching function signatures

In a class hierarchy, methods chained together with super() need compatible signatures, because a class cannot know in advance which class super() will call next

If subclasses require different arguments, don't specify them explicitly but use variable length argument lists for all definitions, e.g.

def my_method(self, *args, **kwargs)

class Shape(object):
    def __init__(self, shapename, **kwargs):
        self.shapename = shapename
        super(Shape, self).__init__(**kwargs)

class ColoredShape(Shape):
    def __init__(self, color, **kwargs):
        self.color = color
        super(ColoredShape, self).__init__(**kwargs)

cs = ColoredShape(color='red', shapename='circle')
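The same pattern carries over to multiple inheritance (introduced next). Each class consumes the keyword arguments it knows about and passes the rest along, so the chain reaches object with nothing left over. A sketch in which the Bordered class and its `width` argument are hypothetical additions:

```python
class Shape(object):
    def __init__(self, shapename, **kwargs):
        self.shapename = shapename
        super(Shape, self).__init__(**kwargs)


class ColoredShape(Shape):
    def __init__(self, color, **kwargs):
        self.color = color
        super(ColoredShape, self).__init__(**kwargs)


class Bordered(Shape):
    # Hypothetical second subclass of Shape, used to build a diamond.
    def __init__(self, width, **kwargs):
        self.width = width
        super(Bordered, self).__init__(**kwargs)


class BorderedColoredShape(ColoredShape, Bordered):
    pass


s = BorderedColoredShape(color='red', width=2, shapename='circle')
print([c.__name__ for c in type(s).__mro__])
# ['BorderedColoredShape', 'ColoredShape', 'Bordered', 'Shape', 'object']

# An argument nobody consumes reaches object.__init__, which rejects it.
try:
    BorderedColoredShape(color='red', width=2, shapename='circle', size=3)
    leftover_rejected = False
except TypeError:
    leftover_rejected = True
```

ColoredShape's super() call lands in Bordered, not Shape. Each class only knows its own arguments, which is why the `**kwargs` pass-through is needed.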

Multiple Inheritance

A class may be derived from multiple base classes

class Combined(Super1, Super2, Super3):
    def __init__(self, *args, **kwargs):
        Super1.__init__(self, *args, **kwargs)
        Super2.__init__(self, *args, **kwargs)
        Super3.__init__(self, *args, **kwargs)


When you call a method on an object, Python first checks whether the method name is defined in the object's class. If not, it follows the Method Resolution Order (MRO) until it either finds the name or reaches the top without locating it, in which case an AttributeError is raised.
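A small sketch of that lookup, reusing the Super1/Super2/Combined names in a hypothetical two-base version. `greet` is found on the second base by walking `__mro__`, while a name that no class defines raises AttributeError:

```python
class Super1(object):
    pass


class Super2(object):
    def greet(self):
        return "hello from Super2"


class Combined(Super1, Super2):
    pass


c = Combined()
# Combined and Super1 don't define greet, so Python keeps walking the MRO.
print([k.__name__ for k in Combined.__mro__])
# ['Combined', 'Super1', 'Super2', 'object']
print(c.greet())  # hello from Super2

try:
    c.missing()
    found = True
except AttributeError:
    found = False   # no class in the MRO defines 'missing'
```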

The MRO algorithm

- linearizes the search order in a way that preserves the left-to-right ordering specified in each class

- ensures each class only appears once

- is monotonic (meaning that a class can be subclassed without affecting the precedence order of its parents)

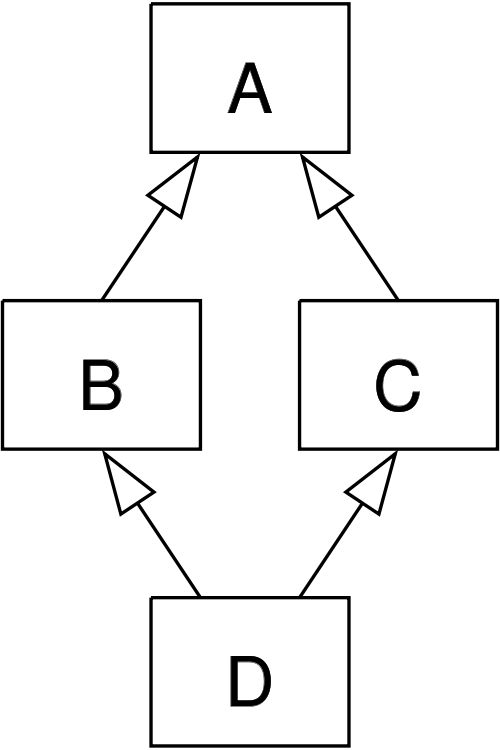

Multiple Inheritance and the Diamond Problem

MRO

The MRO of the previous diagram is [D,B,C,A], and is defined by the C3 linearization algorithm: http://en.wikipedia.org/wiki/C3_linearization

In the previous example, what will calling my_method() on a D instance produce? Verify your answer with examples/mro.py

Classic classes keep their original method resolution order: depth-first, then left to right.

In simple cases, this is a good approximation of the new-style (C3) order.

In general, children precede their parents and the order of appearance in __bases__ is respected.

Multiple Inheritance

class A(object):
    def my_method(self, arg):
        print "called A"

class B(A):
    def my_method(self, arg):
        print "called B"
        super(B, self).my_method(arg)

class C(A):
    def my_method(self, arg):
        print "called C"
        super(C, self).my_method(arg)

class D(B, C):
    def my_method(self, arg):
        print "called D"
        super(D, self).my_method(arg)

print D.mro()

Invalid class hierarchies

The MRO algorithm guarantees consistency

But it can't successfully define an order for every configuration

See examples/invalid_mro.py

O = object
class X(O): pass
class Y(O): pass
class A(X, Y): pass
class B(Y, X): pass
class C(A, B): pass  # raises TypeError: no consistent MRO exists (X, Y order conflicts)

super() Tricks and Trapdoors

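- super() delegates calls to the next class in the __mro__ of the instance's type, which is not necessarily the parent of the class where super() appears

- argument matching with super() methods will bite you

- the last ancestor in the inheritance tree should swallow calls to methods that object doesn't define, instead of passing them on with super()

see /examples/week-03-oo/super/super_last_ancestor_problem.py

- all overridden methods *must* use super()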
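The "last ancestor" point can be sketched as follows. This is a hypothetical example and not necessarily what super_last_ancestor_problem.py contains. object has no draw() method, so the root class that introduces draw() ends the chain instead of calling super():

```python
class Root(object):
    def draw(self, log):
        # Last ancestor: object has no draw(), so stop the chain here
        # rather than calling super(Root, self).draw(log).
        log.append('Root')


class Left(Root):
    def draw(self, log):
        log.append('Left')
        super(Left, self).draw(log)


class Right(Root):
    def draw(self, log):
        log.append('Right')
        super(Right, self).draw(log)


class Both(Left, Right):
    def draw(self, log):
        log.append('Both')
        super(Both, self).draw(log)


calls = []
Both().draw(calls)
print(calls)  # ['Both', 'Left', 'Right', 'Root']
```

If Root.draw also called super(), the call would reach object and fail with AttributeError. If Left or Right skipped super(), part of the chain would silently never run.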

Other design patterns

Inheritance isn't the only way to share code among classes

Inheritance is one form of a more general design pattern called Delegation

Delegation is a general pattern for allowing one object to 'delegate' calls to another object

Another form of delegation is Composition

Another way to share methods amongst classes is with Mixin classes, which are just regular classes containing generic reusable methods

Composition and Mixin are capitalized here, but they aren't Python constructs. They are just generic patterns that are easy to implement in Python. See Design Patterns (GoF) for more on design patterns.

Let's look at Mixins and Composition in Python
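Composition with delegation can be sketched in a few lines. Instead of inheriting from the object it wraps, a class holds a reference to it and forwards unknown attribute lookups through `__getattr__`, which is discussed further under attribute lookups. The Engine and Car classes are hypothetical:

```python
class Engine(object):
    def start(self):
        return "engine started"

    def stop(self):
        return "engine stopped"


class Car(object):
    """Has an Engine (composition) rather than being one (inheritance)."""

    def __init__(self):
        self.engine = Engine()

    def drive(self):
        return "driving"

    def __getattr__(self, name):
        # Only called when normal lookup fails: forward to the engine.
        # Guard 'engine' itself to avoid infinite recursion if it is missing.
        if name == 'engine':
            raise AttributeError(name)
        return getattr(self.engine, name)


car = Car()
print(car.drive())  # driving
print(car.start())  # engine started (delegated to car.engine)
```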

Mixins

Mixins are regular classes following the Mixin design pattern

They contain methods and properties intended to be blended into a new class definition.

class BaseClass(object):
    x = 10

class Mixin1(object):
    def method1(self):
        print "called method1, x = %s" % self.x

class Mixin2(object):
    def method2(self):
        print "called method2, x = %s" % self.x

class MyClass(Mixin2, Mixin1, BaseClass):
    pass

Exercise

In examples/mixins.py, you will find a few Vehicle classes laid out in a hierarchy

The log() method is defined on Vehicle then called on a couple of instances

Modify the class definition for Bike to mix in the fancier log() method from LoggingMixin

Does the output change accordingly? If it doesn't, look at the MRO for Bike. Is it what you expected?

Initialization

An object is initialized after creation by the __init__ method

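class MyInt(int):
    def __init__(self, d):
        # int.__new__ has already fixed the value; __init__ cannot change it.
        # int inherits object.__init__, which takes no extra arguments.
        super(MyInt, self).__init__()

__new__, the object constructor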

__new__ is called to create an instance, and returns it. __init__ is called afterwards to initialize the object

Unlike __init__, __new__ is not an instance method: it is a static method (special-cased, so it needs no decorator) that receives the class as its first argument

It is called with the same arguments as __init__ (with cls in place of self)

It lets you customize immutable types, whose data must be set at construction time

Can return new or existing objects of any type
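As a sketch of that last point, `__new__` can hand back an existing instance instead of creating a new one. The Color class and its `_cache` are hypothetical. Python still calls `__init__` on whatever `__new__` returns if it is an instance of the class, so this sketch does all its setup in `__new__`. If `__new__` returns an object that is not an instance of the class, `__init__` is skipped:

```python
class Color(object):
    _cache = {}

    def __new__(cls, name):
        # Reuse an existing instance for the same name, if there is one.
        if name not in cls._cache:
            instance = super(Color, cls).__new__(cls)
            instance.name = name
            cls._cache[name] = instance
        return cls._cache[name]


red1 = Color('red')
red2 = Color('red')
blue = Color('blue')
print(red1 is red2)  # True
print(red1 is blue)  # False
```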

__new__

class MyClass(object):
    def __init__(self, *args, **kwargs):
        pass

    def __new__(cls, *args, **kwargs):
        print "creating new instance of %s" % cls
        # object.__new__ only needs the class; don't forward *args/**kwargs
        return super(MyClass, cls).__new__(cls)

cls is the class object.

Unlike __init__, it must return the new instance, usually obtained by calling the superclass's __new__

When would you need to use it?

__new__ example

Here is a replacement for int that only holds values between 0 and 255 (anything else wraps around modulo 256):

class ConstrainedInt(int):
    def __new__(cls, value):
        value = value % 256
        self = int.__new__(cls, value)
        return self

Exercise

Create a class called "LoDash" that subclasses the immutable "str" type

When new "LoDash" instances are created, any underscores (_) should be replaced with a string of your choice

Magic Methods

Magic method names all start and end with '__'. They do things like support operators and comparisons, and provide hooks into the object lifecycle.

- __cmp__(self, other)

- __eq__(self, other)

- __add__(self, other)

Also, __call__, __str__, __repr__, __sizeof__, __setattr__, __getattr__, __len__, __iter__, __contains__, __lshift__, __rshift__, __xor__, __div__, __enter__, __exit__, and my personal favorite __rxor__(self, other).

The list is really long; what matters most is getting a flavor of how they are used in Python, so you can find and implement the right one when you need it. See http://www.rafekettler.com/magicmethods.html for more
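A small sketch of a few of these in action, using a hypothetical Vector class. The operators and built-ins on the last lines are translated by Python into calls to the matching magic methods:

```python
class Vector(object):
    def __init__(self, *components):
        self.components = tuple(components)

    def __repr__(self):
        return "Vector%r" % (self.components,)

    def __eq__(self, other):
        return isinstance(other, Vector) and self.components == other.components

    def __ne__(self, other):
        # Python 2 does not derive != from __eq__; Python 3 does.
        return not self == other

    def __add__(self, other):
        return Vector(*[a + b for a, b in zip(self.components, other.components)])

    def __len__(self):
        return len(self.components)

    def __contains__(self, value):
        return value in self.components


v = Vector(1, 2) + Vector(3, 4)   # calls Vector.__add__
print(v)                          # Vector(4, 6)  (via __repr__)
print(v == Vector(4, 6))          # True          (via __eq__)
print(len(v))                     # 2             (via __len__)
print(6 in v)                     # True          (via __contains__)
```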

Magic Methods and Attribute Lookups

What are the mechanics of a dot-operator attribute lookup? Let's focus on a class instance (object):

instance = Klass()

instance.name <- how?

instance.name = 'Bob' <- how?

Which methods provide hooks for overrides?

It can be confusing

You've probably heard about all of these at some point:

- __getattr__

- __setattr__

- __getattribute__

- __get__

- __set__

Let's look at this more and get a better understanding

NOTE: we will not be looking at class attribute lookups -- those work with metaclasses (more on that later)

Attribute Storage

Python classes and class instances (objects) hold attributes in dictionaries

Class and object dictionaries hold different information

Look at /examples/magic_methods/object_attribute_lookups.py

In [2]: c = Container1()
In [3]: c.__dict__
Out[3]: {'name': 'parent'}
In [4]: c = Container2()
In [5]: c.__dict__
Out[5]: {'_name': 'parent'}
In [6]: Container2.__dict__
Out[6]: {
    ... other attributes ...,
    'name': <property at 0x7fa9d4b11f70>
}

Why does the attribute "name" appear in the class dictionary in one case and in the instance dictionary in the other?

Descriptors

Properties affect where an attribute is stored and how it is bound to the class

Technically, properties are descriptors

A descriptor is an object which implements certain magic methods in the descriptor protocol to affect binding behavior. Those methods are:

- __get__()

- __set__()

- __delete__()

They are the mechanism behind properties, methods, static methods, and class methods
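To make the protocol concrete, here is a sketch of a hypothetical PositiveNumber descriptor (not from the course examples) that validates assignments. Because it defines both `__get__` and `__set__`, every read and write of `width` on a Box instance goes through it. The actual value is kept in the instance's `__dict__` under a private name, similar in spirit to the '_name' entry seen for Container2:

```python
class PositiveNumber(object):
    """Descriptor that only accepts values greater than zero."""

    def __init__(self, name):
        self.name = name                  # public attribute name
        self.storage = '_' + name         # where the value really lives

    def __get__(self, instance, owner):
        if instance is None:              # accessed on the class itself
            return self
        return instance.__dict__[self.storage]

    def __set__(self, instance, value):
        if value <= 0:
            raise ValueError("%s must be positive" % self.name)
        instance.__dict__[self.storage] = value


class Box(object):
    width = PositiveNumber('width')      # lives in Box.__dict__

    def __init__(self, width):
        self.width = width                # goes through PositiveNumber.__set__


b = Box(3)
print(b.width)        # 3
print(b.__dict__)     # {'_width': 3}
try:
    b.width = -1
except ValueError as exc:
    print(exc)        # width must be positive
```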

Data and Non-Data Descriptors

There are two kinds of descriptors:

Data descriptors implement __set__ (and/or __delete__), usually together with __get__

Properties are data descriptors. They have many forms. See /examples/magic_methods/name_descriptor.py

Non-data descriptors only implement the __get__ method

All functions and class methods are non-data descriptors
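The difference matters for precedence. In this hypothetical sketch, an entry with the same name is placed in the instance `__dict__` for two class attributes. The data descriptor still wins, while the non-data descriptor (an ordinary method) is shadowed by the instance entry:

```python
class DataDesc(object):
    def __get__(self, instance, owner):
        return "from data descriptor"

    def __set__(self, instance, value):
        raise AttributeError("read-only")


class Demo(object):
    data = DataDesc()       # data descriptor: defines __get__ and __set__

    def method(self):       # functions are non-data descriptors
        return "from method"


d = Demo()
# Write straight into the instance dictionary, bypassing __set__.
d.__dict__['data'] = "from instance dict"
d.__dict__['method'] = "from instance dict"

print(d.data)      # from data descriptor  (data descriptor wins)
print(d.method)    # from instance dict    (instance dict wins)
```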

Attribute Lookup Workflow

There are two magic methods that handle attribute lookups. Both can be overridden:

- __getattribute__

will be called for every dot-operator lookup that happens on your class instance

- __getattr__

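called only if __getattribute__ raises AttributeError (that is, the normal lookup fails)

NOTE: beware of infinite loops! Inside __getattribute__, any self.attr access calls __getattribute__ again; use object.__getattribute__(self, name) to get the default behavior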
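A hypothetical sketch of both hooks. `__getattribute__` logs every lookup and delegates the real work to `object.__getattribute__`, because reading `self.log` directly inside it would recurse forever. `__getattr__` only supplies a fallback for names the normal lookup can't find:

```python
class Tracked(object):
    def __init__(self):
        self.name = 'Bob'
        self.log = []

    def __getattribute__(self, attr):
        # Called for *every* dot lookup on the instance. Reading self.log
        # here would call __getattribute__ again and recurse forever, so
        # go through object.__getattribute__ instead.
        log = object.__getattribute__(self, 'log')
        log.append(attr)
        return object.__getattribute__(self, attr)

    def __getattr__(self, attr):
        # Only called when the normal lookup raised AttributeError.
        return "no attribute %r" % attr


t = Tracked()
print(t.name)     # Bob
print(t.missing)  # no attribute 'missing'
print(t.log)      # ['name', 'missing', 'log']
```

Assignments such as `self.name = 'Bob'` go through `__setattr__`, not `__getattribute__`. That is why the lookups made during `__init__` do not appear in the log.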

__getattribute__ does a lot

What is the resolution workflow of __getattribute__?

klass = type(instance)
# (simplified: the real lookup searches every class in klass.__mro__,
#  not just klass.__dict__)

# 1. data descriptors on the class win
if attrname in klass.__dict__:
    attr = klass.__dict__[attrname]
    if hasattr(attr, '__get__') and hasattr(attr, '__set__'):
        return attr.__get__(instance, klass)

# 2. then the instance dictionary
if attrname in instance.__dict__:
    return instance.__dict__[attrname]

# 3. then non-data descriptors and plain class attributes
if attrname in klass.__dict__:
    attr = klass.__dict__[attrname]
    if hasattr(attr, '__get__'):
        return attr.__get__(instance, klass)
    return attr

# 4. nothing found: fall back to __getattr__ (AttributeError if undefined)
return klass.__getattr__(instance, attrname)

Notice it's checking for descriptors in the lookup workflow? That's interesting.

Let's look at examples/magic_methods/object_attribute_lookups.py

A class is just an object

Objects get created from classes. So what is the class of a class?

The class of a class is called a metaclass

The metaclass can be used to dynamically create a class

The metaclass, being a class, also has a metaclass

What is a metaclass?

- A class is something that makes instances

- A metaclass is something that makes classes

- A metaclass is most commonly used as a class factory

- metaclasses allow you to do 'extra things' when creating a class, like registering the new class with some registry, adding methods dynamically, or even replacing the class with something else entirely

- Every class in Python has a metaclass

- The default metaclass is type
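As a sketch of the registry idea from the list above, a metaclass can record every class it creates. RegistryMeta and the plugin classes are hypothetical. The sketch uses Python 3's `metaclass=` keyword; the Python 2 `__metaclass__` spelling is covered below:

```python
class RegistryMeta(type):
    """Records every class created with this metaclass, keyed by name."""
    registry = {}

    def __new__(mcls, name, bases, namespace):
        cls = super().__new__(mcls, name, bases, namespace)
        mcls.registry[name] = cls
        return cls


class Plugin(metaclass=RegistryMeta):
    pass


class CsvPlugin(Plugin):      # inherits the metaclass from Plugin
    pass


class JsonPlugin(Plugin):
    pass


print(sorted(RegistryMeta.registry))  # ['CsvPlugin', 'JsonPlugin', 'Plugin']
print(type(CsvPlugin) is RegistryMeta)  # True
```

Subclasses are registered automatically because a class's metaclass is inherited by its subclasses.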

type()

With one argument, type() returns the type of the argument

With 3 arguments, type() returns a new class

type?
Type:        type
String Form: <type 'type'>
Namespace:   Python builtin
Docstring:
type(object) -> the object's type
type(name, bases, dict) -> a new type

name: string name of the class
bases: tuple of the parent classes
dict: dict containing attribute names and values

using type() to build a class

The class statement is syntactic sugar; we can get by without it by calling type() directly

class MyClass(object):
    x = 1

OR

MyClass = type('MyClass', (), {'x': 1})

(object is automatically a superclass)
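A quick check that both spellings produce equivalent classes. The names ClassA and ClassB are only there to keep the two versions apart:

```python
class ClassA(object):
    x = 1

ClassB = type('ClassB', (), {'x': 1})

print(ClassA.__bases__ == ClassB.__bases__ == (object,))  # True
print(ClassA.x == ClassB.x == 1)                          # True
print(type(ClassA) is type(ClassB) is type)               # True
```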

Adding methods to a class built with type()

Just define a function with the correct signature and add it to the attr dictionary

def my_method(self):
    print "called my_method, x = %s" % self.x

MyClass = type('MyClass',(), {'x': 1, 'my_method': my_method})

o = MyClass()

o.my_method()

What type is type?

In [1]: type(type)
Out[1]: type

__metaclass__

class Foo(object):
    __metaclass__ = MyMetaClass

Python will look for __metaclass__ in the class definition.

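If it finds it, it will use it to create the class object Foo.

If it doesn't, it will use the metaclass of the first base class, which for an ordinary class deriving from object is type.

__metaclass__ can also be defined at the module level, where (in Python 2) it applies to classes that have no base classes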

Whatever is assigned to __metaclass__ should be a callable with the same signature as type()
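Because anything callable with type()'s three-argument signature will do, even a plain function can act as a metaclass. Here is a hypothetical sketch in Python 3 syntax, where the hook is the `metaclass=` keyword rather than a `__metaclass__` attribute. The function receives the same arguments type() would and must return the class:

```python
def add_created_by(name, bases, namespace):
    """Plain function used as a metaclass: tweak the namespace, then call type()."""
    namespace['created_by'] = 'add_created_by'
    return type(name, bases, namespace)


class Report(metaclass=add_created_by):
    title = 'Q3'


print(Report.created_by)   # add_created_by
print(type(Report))        # <class 'type'>
```

One consequence of this design is that a subclass of Report is created by Report's real metaclass, type, so the function does not run again. Use a subclass of type when the behavior should be inherited.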

Why use metaclasses?

Useful when creating an API or framework

Whenever you need to manage object creation for one or more classes

For example, see examples/singleton.py
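One common way to build a singleton with a metaclass is to override `__call__`, which runs whenever the class is called to create an instance. This is a hypothetical sketch in Python 3 syntax and may differ from examples/singleton.py:

```python
class SingletonMeta(type):
    """Metaclass whose classes only ever create one instance each."""
    _instances = {}

    def __call__(cls, *args, **kwargs):
        # Calling the class (e.g. Config()) lands here.
        if cls not in cls._instances:
            cls._instances[cls] = super().__call__(*args, **kwargs)
        return cls._instances[cls]


class Config(metaclass=SingletonMeta):
    def __init__(self):
        self.settings = {}


a = Config()
b = Config()
print(a is b)  # True
```

Inside `__call__`, `cls._instances` is found on the metaclass, because attribute lookup on a class falls back to its metaclass.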

Or consider the Django ORM:

class Person(models.Model):
    name = models.CharField(max_length=30)
    age = models.IntegerField()

person = Person(name='bob', age=35)
print person.name

When the Person class is created, it is dynamically modified to integrate with the configured database backend. Thus, different configurations lead to different class definitions. This is abstracted away from the user of the Model class.

Here is the Django Model metaclass: https://github.com/django/django/blob/master/django/db/models/base.py#L59

Metaclass example

Suppose we want a metaclass that mangles attribute names so that every attribute is available in both uppercase and lowercase


class Foo(object):
    __metaclass__ = NameMangler
    x = 1

f = Foo()
print f.X
print f.x

NameMangler

class NameMangler(type):

    def __new__(cls, clsname, bases, dct):
        uppercase_attr = {}
        for name, val in dct.items():
            if not name.startswith('__'):
                uppercase_attr[name.upper()] = val
                uppercase_attr[name] = val
            else:
                uppercase_attr[name] = val
        return super(NameMangler, cls).__new__(cls, clsname, bases, uppercase_attr)

class Foo(object):
    __metaclass__ = NameMangler
    x = 1
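A porting note: Python 3 ignores a `__metaclass__` attribute in the class body (it just becomes an ordinary class attribute), so the Foo above would silently lose its uppercase names there. Below is a sketch of the same NameMangler logic in Python 3 syntax, slightly condensed:

```python
class NameMangler(type):
    def __new__(mcls, clsname, bases, dct):
        mangled = {}
        for name, val in dct.items():
            mangled[name] = val
            if not name.startswith('__'):
                # Also expose non-dunder attributes under an uppercase name.
                mangled[name.upper()] = val
        return super().__new__(mcls, clsname, bases, mangled)


class Foo(metaclass=NameMangler):
    x = 1


f = Foo()
print(f.X)  # 1
print(f.x)  # 1
```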

Exercise: Working with NameMangler

In the repository, find and run examples/mangler.py

Modify the NameMangler metaclass such that setting an attribute f.x also sets f.xx

Now create a new metaclass, MangledSingleton, composed of the NameMangler and Singleton classes in the examples/ directory. Assign it to the __metaclass__ attribute of a new class and verify that it works.

Your code should look like this:

class MyClass(object):
    __metaclass__ = MangledSingleton  # define this
    x = 1

o1 = MyClass()
o2 = MyClass()
print o1.X
assert id(o1) == id(o2)

Reference reading

/